Voice AI Disaster Recovery and Failover Architecture for Enterprises

Enterprise voice AI systems require disaster recovery architectures that achieve sub-30-second failover without dropping active calls, using multi-region deployment, SIP trunk redundancy, and graceful degradation to human queues.

When a voice AI platform handles thousands of concurrent calls across a contact center, an infrastructure failure does not present as a dashboard alert. It presents as silence on the other end of a phone call. Unlike web applications, where users can refresh a page, voice calls are stateful, real-time sessions, and a dropped call cannot be retried transparently. This makes disaster recovery architecture for voice AI fundamentally different from standard application DR: it requires telephony-aware failover mechanisms, health detection within seconds, and degradation strategies that route to human agents rather than dead air.

For enterprise voice AI with automatic failover, 99.99% financially guaranteed uptime, and hybrid on-premise/cloud resilience, contact the Trillet Enterprise team.

What Makes Disaster Recovery Different for Voice AI?

Voice AI disaster recovery must account for real-time stateful sessions, telephony signaling, and failover budgets measured in seconds, none of which standard application DR frameworks were designed for.

Traditional web application DR operates on the assumption that requests are stateless and retryable. A user hitting a failed server can be rerouted to a healthy one, and the retry happens transparently. Voice AI violates every one of these assumptions:

Stateful sessions: A caller mid-conversation has context, intent history, and an active audio stream. Failover must either preserve session state or gracefully restart the interaction.

Real-time constraints: HTTP requests tolerate 5-10 seconds of failover latency. Voice calls tolerate only 1-3 seconds of silence before the caller perceives a failure.

Telephony signaling: SIP sessions, RTP media streams, and PSTN connections operate independently from application-layer health. A healthy application behind a failed SIP trunk is unreachable.

Bidirectional audio: Unlike request-response patterns, voice AI maintains continuous bidirectional audio streams. Failover must handle media renegotiation without audible artifacts.

Regulatory state: In regulated industries, a failover event that routes calls to a different geographic region may violate data residency requirements mid-conversation.

These constraints demand DR architectures purpose-built for voice, not adapted from web application patterns.

What Are the Core DR Architecture Patterns for Voice AI?

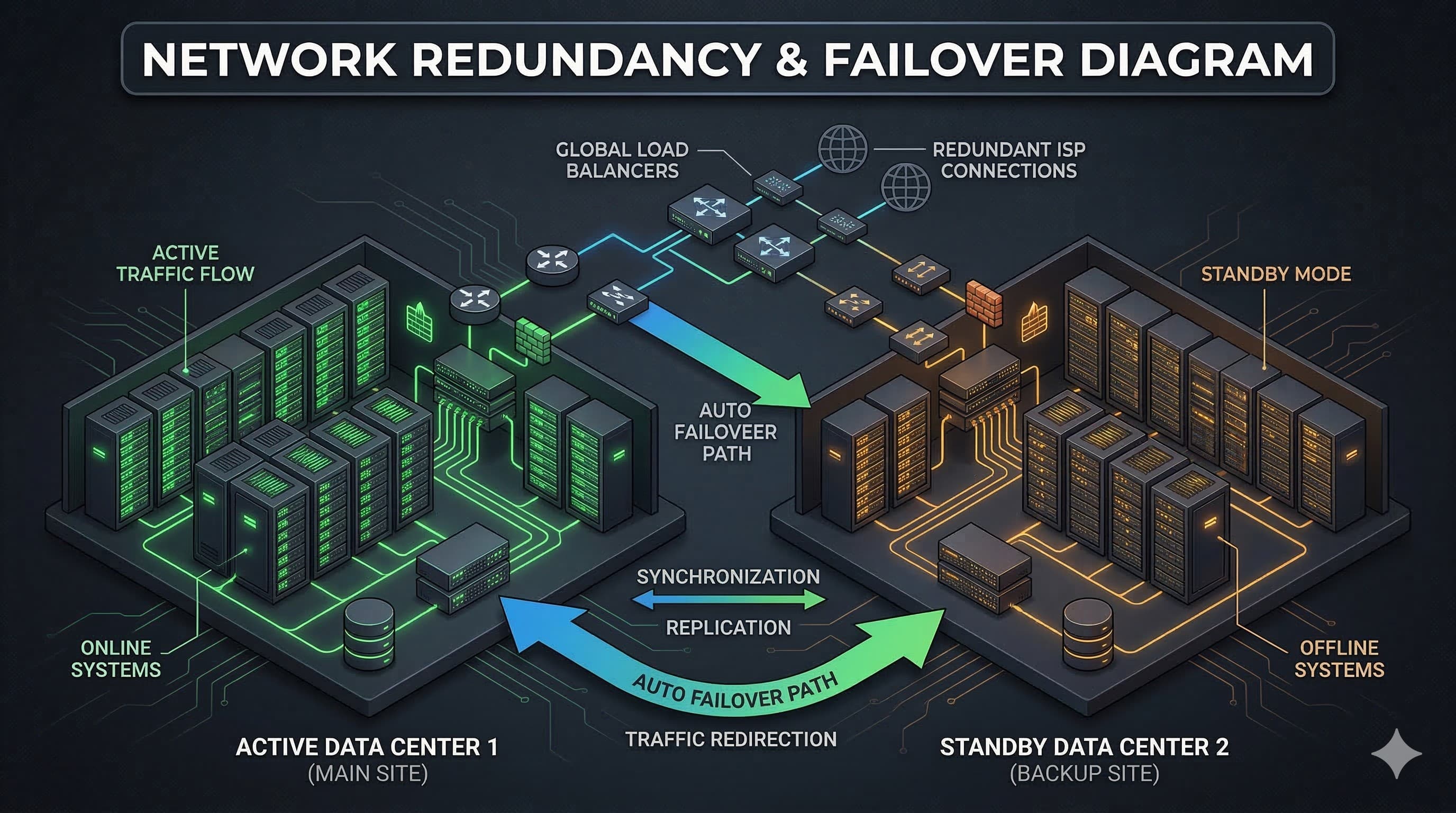

The three primary patterns — active-active, active-passive, and multi-region — each present distinct trade-offs between cost, complexity, and recovery speed.

Active-Active Architecture

In an active-active configuration, two or more deployment environments handle production traffic simultaneously. Incoming calls are distributed across all active nodes, and if one fails, the remaining nodes absorb the load without any failover delay for new calls.

Advantages:

Zero failover time for new calls (traffic is already distributed)

Load balancing improves performance under normal conditions

No cold-start latency when a secondary environment activates

Challenges:

Session state must be replicated in real-time across all nodes

Higher infrastructure cost (all environments run at production capacity)

Split-brain scenarios require careful handling during network partitions

Data residency compliance is more complex when traffic flows across regions

Active-active is the preferred pattern for enterprise voice AI deployments where any call interruption is unacceptable. Trillet's multi-region cloud deployment supports active-active configurations across APAC, North America, and EMEA regions.
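The real-time session replication that active-active depends on can be pictured as versioned snapshots shipped to a peer node. The following is a minimal sketch under stated assumptions, not a description of any vendor's implementation: `SessionSnapshot`, `ReplicaStore`, and the fields shown are hypothetical names, and the store is a plain in-memory dictionary standing in for a replicated database.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SessionSnapshot:
    """Hypothetical per-call state a peer node would need to resume a call."""
    call_id: str
    version: int                 # incremented by the owning node on every update
    intent_history: tuple = ()   # e.g. ("greeting", "billing_dispute")


@dataclass
class ReplicaStore:
    """In-memory stand-in for a replicated session store on a peer node."""
    _sessions: dict = field(default_factory=dict)

    def apply(self, snap: SessionSnapshot) -> bool:
        """Accept a snapshot only if it is newer than what is already held."""
        current = self._sessions.get(snap.call_id)
        if current is not None and current.version >= snap.version:
            return False         # stale or duplicate update; ignore it
        self._sessions[snap.call_id] = snap
        return True

    def resume(self, call_id: str):
        """Return the latest known state for a call, or None if never replicated."""
        return self._sessions.get(call_id)
```

Rejecting snapshots whose version is not newer keeps out-of-order replication traffic from rolling a call back to older context. It does not by itself resolve split-brain, where two nodes both believe they own the same call.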

Active-Passive Architecture

An active-passive configuration maintains a standby environment that activates only when the primary fails. The standby environment is pre-provisioned but does not handle production traffic during normal operation.

Advantages:

Lower cost (standby runs at reduced capacity)

Simpler data consistency (single active source of truth)

Clearer compliance posture (traffic routes to one known region)

Challenges:

Failover introduces delay (10-60 seconds depending on detection and activation speed)

Standby environment may have cold-start issues if not regularly exercised

Active calls during the failover window are typically lost

Active-passive works for organizations where occasional brief interruptions are acceptable and cost optimization is a priority.

Multi-Region Deployment

Multi-region architecture distributes voice AI infrastructure across geographically separated data centers, providing resilience against regional cloud outages, natural disasters, and localized network failures.

Advantages:

Survives the failure of an entire region (an event that major cloud providers do experience)

Reduces latency for geographically distributed callers

Supports data residency requirements by routing calls to region-appropriate infrastructure

Challenges:

Cross-region state synchronization adds complexity and latency

DNS propagation delays can slow failover if not using active-active routing

Cost scales with the number of regions maintained

Comparison: DR Architecture Patterns for Voice AI

| Attribute | Active-Active | Active-Passive | Multi-Region Active-Active |
| --- | --- | --- | --- |
| Failover time (new calls) | 0 seconds | 10-60 seconds | 0 seconds |
| Active call survival | High (with session replication) | Low (active calls typically dropped) | High (with cross-region replication) |
| Infrastructure cost | 2x+ baseline | 1.3-1.5x baseline | 2.5-3x baseline |
| Complexity | High | Moderate | Very high |
| Data residency control | Moderate | High | High (with geo-routing) |
| Regional outage resilience | Partial (same-region only) | Partial | Full |
| Recommended for | High-volume contact centers | Cost-sensitive deployments | Mission-critical, regulated industries |

What Failover Mechanisms Protect Voice AI Systems?

Effective voice AI failover relies on layered mechanisms spanning telephony, network, and application tiers — each operating at different speeds and granularity.

SIP Trunk Failover

SIP trunks are the primary path between the PSTN and your voice AI infrastructure. Trunk failures are the most common cause of voice AI unavailability.

Enterprise configurations should implement:

Multiple carrier relationships: At minimum two SIP trunk providers with automatic failover. If Carrier A fails, calls route to Carrier B within seconds.

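SIP 302 redirect: A redirect server or session border controller answers new INVITE requests with a 302 Moved Temporarily response whose Contact header points at the backup trunk, so rerouting is transparent to the caller. The redirecting element must itself remain reachable when the primary trunk fails.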

SIP DNS SRV records: Multiple SRV records with priority and weight values enable automatic trunk selection without application-layer intervention. Lower priority values are tried first, and weight splits traffic among records of equal priority.

Trunk group monitoring: Continuous OPTIONS pings detect trunk failures before callers are affected, enabling preemptive rerouting.
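The combination of SRV priority and weight with OPTIONS-based health monitoring can be sketched as follows. This is a simplified illustration rather than a full implementation of the SRV selection rules: `SrvRecord`, `order_targets`, and the hostnames are hypothetical, and the `healthy` set stands in for whatever the OPTIONS monitor last reported.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SrvRecord:
    priority: int   # lower value is tried first
    weight: int     # relative share among records of equal priority
    target: str     # hostname of the SIP trunk endpoint (hypothetical here)
    port: int = 5060


def order_targets(records, healthy, rng=random):
    """Return healthy SRV targets in the order a client would try them."""
    ordered = []
    for prio in sorted({r.priority for r in records}):
        group = [r for r in records if r.priority == prio and r.target in healthy]
        # Weighted random draw without replacement inside one priority level.
        while group:
            weights = [max(r.weight, 0) for r in group]
            if sum(weights) == 0:
                pick = rng.choice(group)
            else:
                pick = rng.choices(group, weights=weights, k=1)[0]
            ordered.append(pick)
            group.remove(pick)
    return ordered
```

With two priority-10 records for Carrier A and one priority-20 record for Carrier B, the Carrier B record is tried only after both Carrier A targets, or immediately if monitoring has marked both Carrier A targets unhealthy.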

DNS-Based Failover

DNS failover provides region-level rerouting by modifying DNS resolution to point traffic at healthy infrastructure.

Health-checked DNS: Services like Route 53 health checks or Cloudflare load balancing monitor endpoint health and update DNS records automatically.

TTL considerations: DNS TTL values for voice AI endpoints should be set to 30-60 seconds. Lower TTLs enable faster failover but increase DNS query volume.

Limitation: DNS failover does not save active calls. It only routes new calls to healthy infrastructure. For active call preservation, application-layer failover is required.
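A rough upper bound on DNS-based rerouting time for new calls is the health-check detection time plus one full TTL, since a resolver may have cached the old record just before it changed. The sketch below is back-of-the-envelope arithmetic with illustrative inputs. It assumes resolvers honor the TTL, which not all do, and the function name is hypothetical.

```python
def worst_case_dns_failover_s(check_interval_s, failure_threshold, ttl_s):
    """Upper bound on how long new calls may still resolve to a failed endpoint."""
    detection_s = check_interval_s * failure_threshold  # time to declare failure
    return detection_s + ttl_s                          # plus one full cached TTL


# Illustrative values: 10 s checks, 3 failures to trip, 30 s TTL.
print(worst_case_dns_failover_s(10, 3, 30))
```

With these inputs the bound is 60 seconds, which is why DNS failover is best treated as the region-level layer, with trunk and load balancer failover covering the faster cases.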

Load Balancer Health Checks

Application load balancers positioned in front of voice AI processing nodes provide the fastest failover for application-layer failures.

Health check endpoints: Dedicated /health endpoints that verify not just process liveness but actual call-processing capability (LLM connectivity, telephony gateway status, database reachability).

Check intervals: Health checks for voice AI should run every 5-10 seconds with a failure threshold of 2 consecutive failures, triggering node removal within 10-20 seconds.

Session-aware routing: Load balancers must support sticky sessions for active calls while routing new calls away from degraded nodes.
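The consecutive-failure threshold described above can be sketched as a small state tracker. This is a minimal illustration under assumptions: `NodeHealth` is a hypothetical name, the three boolean inputs stand in for the dependency checks a `/health` endpoint would run, and real load balancers usually also require several consecutive successes before re-adding a node.

```python
from dataclasses import dataclass


@dataclass
class NodeHealth:
    failure_threshold: int = 2      # consecutive failures before removal
    consecutive_failures: int = 0
    in_rotation: bool = True

    def record(self, llm_ok: bool, telephony_ok: bool, db_ok: bool) -> bool:
        """Record one health-check result; return whether the node takes new calls."""
        if llm_ok and telephony_ok and db_ok:
            self.consecutive_failures = 0
            self.in_rotation = True
        else:
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.failure_threshold:
                self.in_rotation = False
        return self.in_rotation
```

A node whose database check fails once stays in rotation. A second consecutive failure removes it for new calls, while sticky routing keeps existing calls where they are.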

Graceful Degradation to Human Queues

The most critical failover mechanism for voice AI is not technical — it is operational. When AI processing fails, calls must route to human agents rather than dropping into silence.

Trillet's enterprise platform implements automatic failover from AI to ViciDial and PBX queues. If the voice AI cannot process a call — whether due to LLM provider failure, capacity exhaustion, or any processing error — the call transfers to a human agent queue with full context passed via CTI data. The caller experiences a brief hold rather than a disconnection.

This pattern follows a clear degradation hierarchy:

Primary: AI handles the call end-to-end

Degraded: AI handles initial greeting and intent capture, then transfers to human with context

Failover: Call routes directly to human queue with IVR-based initial routing

Emergency: Calls route to overflow numbers or voicemail
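The degradation hierarchy above can be expressed as a simple decision function. This is a sketch only: the `Tier` enum, the `choose_tier` function, and the three health flags are hypothetical names, and a real system would derive these flags from live monitoring rather than pass them in.

```python
from enum import Enum


class Tier(Enum):
    PRIMARY = "ai_end_to_end"
    DEGRADED = "ai_greeting_then_human"
    FAILOVER = "human_queue_via_ivr"
    EMERGENCY = "overflow_or_voicemail"


def choose_tier(ai_full_ok: bool, ai_intake_ok: bool, human_queue_ok: bool) -> Tier:
    """Pick the highest tier that current component health allows."""
    if ai_full_ok:
        return Tier.PRIMARY
    if ai_intake_ok and human_queue_ok:
        return Tier.DEGRADED      # AI captures intent, then hands off with context
    if human_queue_ok:
        return Tier.FAILOVER      # skip AI entirely, route through IVR to humans
    return Tier.EMERGENCY         # nothing else available
```

Evaluating tiers from best to worst means a call always lands on the most capable path still available, and never on silence.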

What RTO and RPO Targets Should Voice AI Systems Meet?

Voice AI systems require significantly more aggressive RTO targets than typical enterprise applications, with RPO varying based on what data is considered critical.

RTO/RPO Matrix for Voice AI Components

| Component | Target RTO | Target RPO | Justification |
| --- | --- | --- | --- |
| Telephony (SIP/PSTN) | < 10 seconds | N/A (no durable data) | Callers perceive silence after 2-3 seconds |
| AI processing (LLM inference) | < 30 seconds | 0 (stateless per-call) | Calls can queue briefly or degrade to human |
| Session state | < 5 seconds | < 1 second | Active call context must survive failover |
| CRM/integration layer | < 60 seconds | < 5 minutes | Calls can proceed without CRM data temporarily |
| Call recordings | < 4 hours | < 1 minute | Recordings can be reconstructed from replicated audio |
| Analytics/reporting | < 24 hours | < 1 hour | Non-real-time, can tolerate delayed recovery |
| Configuration/routing rules | < 30 seconds | 0 (replicated) | Incorrect routing is worse than brief downtime |

For voice systems specifically, the critical RTO threshold is 30 seconds. Beyond that window, callers hang up, queued calls time out, and the business impact escalates rapidly. Trillet's 99.99% financially guaranteed uptime SLA is architected around these sub-30-second recovery targets.
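The link between a 99.99% uptime figure and a 30-second recovery window is plain arithmetic, worked through below. The sketch assumes every second of a failover counts as downtime, which depends on how a given SLA defines an outage. The function names are hypothetical.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600, ignoring leap years


def downtime_budget_minutes(availability_pct: float) -> float:
    """Minutes of downtime per year permitted at a given availability."""
    return MINUTES_PER_YEAR * (1 - availability_pct / 100)


def max_failovers_per_year(availability_pct: float, outage_seconds: float) -> int:
    """How many outages of a given length fit inside the yearly budget."""
    return int(downtime_budget_minutes(availability_pct) * 60 // outage_seconds)


print(round(downtime_budget_minutes(99.99), 2))   # about 52.56 minutes per year
print(max_failovers_per_year(99.99, 30))          # 105 thirty-second failovers
```

At 99.99%, the yearly budget is roughly 52.6 minutes. A single incident that takes five minutes to recover consumes almost a tenth of it, while a 30-second failover consumes under one percent.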

RPO Considerations for Regulated Industries

In healthcare and financial services, RPO for conversation data carries compliance implications:

HIPAA: PHI in active conversations must not be lost or exposed during failover. Treat encryption in transit for session state replication as a requirement.

SOC 2: Audit logs of call events must survive failover without gaps. Write-ahead logging to replicated storage addresses this.

Data residency: RPO strategies that replicate data across regions must respect geographic constraints. A failover that replicates Australian call data to a US recovery site may violate data residency requirements.

Trillet's configurable data residency ensures that failover replication respects geographic boundaries, with recovery targets aligned to region-specific regulatory requirements.
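Region-locked failover of the kind described above can be sketched as a filter over candidate regions. This is an illustrative sketch, not a description of any platform's configuration: the region names, the `JURISDICTION` map, and `pick_failover_region` are all hypothetical, and the real mapping of regions to permitted jurisdictions would come from legal review.

```python
# Hypothetical region-to-jurisdiction map; real values come from legal review.
JURISDICTION = {
    "ap-sydney": "AU",
    "ap-melbourne": "AU",
    "us-east": "US",
    "eu-frankfurt": "EU",
}


def pick_failover_region(primary, healthy_regions, allowed_jurisdictions=None):
    """Return the first healthy region data from `primary` may move to, else None."""
    allowed = allowed_jurisdictions or {JURISDICTION[primary]}
    for region in healthy_regions:
        if region != primary and JURISDICTION.get(region) in allowed:
            return region
    return None  # no compliant target: degrade to human queues instead
```

Returning `None` rather than the nearest healthy region makes the compliance decision explicit: when no in-jurisdiction target exists, the call path degrades instead of silently crossing a border.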

How Does Hybrid On-Premise and Cloud Deployment Improve Resilience?

Hybrid architectures that combine on-premise Docker deployment with cloud infrastructure create a resilience model where neither environment is a single point of failure.

The hybrid DR architecture operates on a principle of environmental independence:

On-premise as primary, cloud as failover:

Voice AI runs on Docker containers within the enterprise data center

If on-premise infrastructure fails (power, network, hardware), calls route to Trillet's cloud infrastructure automatically

SIP trunk failover detects on-premise unavailability and redirects to cloud endpoints

Data residency is maintained because the cloud failover region matches the on-premise location

Cloud as primary, on-premise as failover:

Voice AI runs in Trillet's multi-region cloud

If the cloud region fails, calls route to on-premise Docker containers

The enterprise maintains minimal on-premise capacity for DR purposes

This pattern suits organizations that prefer cloud operations but require guaranteed availability

Dual-active hybrid:

Both on-premise and cloud handle production traffic simultaneously

Load balancing distributes calls based on capacity and latency

Either environment can absorb full load if the other fails

Maximum resilience at maximum cost and complexity
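Whether either environment can truly absorb full load is a capacity check that is easy to run before an incident. The sketch below uses illustrative concurrent-call capacities, and `survives_peer_loss` is a hypothetical helper name.

```python
def survives_peer_loss(capacity_calls: dict, peak_concurrent_calls: int) -> dict:
    """For each environment, report whether peak load still fits if it is lost."""
    total = sum(capacity_calls.values())
    return {
        env: (total - cap) >= peak_concurrent_calls
        for env, cap in capacity_calls.items()
    }


# Illustrative numbers: on-premise sized for 1,500 calls, cloud for 3,000.
print(survives_peer_loss({"on_prem": 1500, "cloud": 3000}, 2000))
```

In this example, losing the on-premise environment is survivable because the cloud alone covers the 2,000-call peak. Losing the cloud is not, so the deployment is dual-active in name only until on-premise capacity grows.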

Trillet is the only voice AI platform that supports this hybrid model, because it is the only platform offering true on-premise deployment via Docker alongside multi-region cloud infrastructure.

Container Orchestration for On-Premise Failover

On-premise Docker deployments leverage Kubernetes for container-level DR:

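Pod health monitoring: Kubernetes liveness probes detect failed containers and restart them automatically, typically within 5-15 seconds when tuned for it. Readiness probes do not restart anything; they remove unready pods from service endpoints so that new calls stop reaching them.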

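Node failover: If a physical node fails, Kubernetes reschedules its pods to healthy nodes in the cluster. By default, pods tolerate an unreachable node for about five minutes before eviction, so this timeout needs tuning to meet voice RTO targets.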

Rolling updates: Zero-downtime deployments ensure that software updates do not create DR events.

Persistent volume replication: Conversation state and configuration data replicate across nodes using distributed storage (Ceph, Longhorn, or cloud-provider CSI drivers).
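How quickly a liveness probe reacts depends on its period, failure threshold, and timeout. The following sketch approximates the worst-case detection window. The formula is a simplification that ignores container restart time, and the function name is hypothetical. The default arguments mirror the documented Kubernetes probe defaults.

```python
def liveness_detection_window_s(period_s=10, failure_threshold=3, timeout_s=1):
    """Approximate worst-case seconds from a container hanging to a restart decision.

    Defaults mirror the Kubernetes probe defaults
    (periodSeconds=10, failureThreshold=3, timeoutSeconds=1).
    """
    return period_s * failure_threshold + timeout_s


print(liveness_detection_window_s())          # defaults
print(liveness_detection_window_s(5, 2, 1))   # tighter, voice-oriented settings
```

With defaults the window is about 31 seconds, which already exceeds a 30-second RTO before the container has restarted. A 5-second period with a threshold of 2 brings it to about 11 seconds, at the cost of more false-positive restarts on a briefly slow container.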

How Should Enterprises Test DR Procedures for Voice AI?

DR plans that are not tested regularly are recovery plans in theory only. Voice AI DR testing requires telephony-aware chaos engineering that goes beyond standard infrastructure failure simulation.

Recommended DR testing practices:

Scheduled failover drills: Monthly automated failover tests during low-traffic periods, verifying that calls route correctly to secondary infrastructure.

SIP trunk failure simulation: Deliberately disable primary SIP trunks to verify automatic carrier failover and measure actual rerouting time.

AI processing failure injection: Simulate LLM provider outages to verify graceful degradation to human queues functions correctly.

Region evacuation tests: For multi-region deployments, simulate full region failure and measure time to full capacity recovery in the surviving region.

Data residency verification: During failover events, verify that call data routes to compliant storage and does not leak to unauthorized regions.

Load testing under failover: Verify that the surviving environment can handle full production load, not just its normal share.

Trillet's 24/7 onshore Australian management team conducts regular DR testing as part of enterprise managed service agreements, providing post-test reports documenting failover times, call completion rates during failover, and any identified gaps.

Key metrics to capture during DR tests:

| Metric | Target | What It Measures |
| --- | --- | --- |
| Failover detection time | < 10 seconds | How quickly the system identifies a failure |
| Call rerouting time | < 30 seconds | Time for new calls to reach healthy infrastructure |
| Active call drop rate | < 5% | Percentage of in-progress calls lost during failover |
| Degradation accuracy | 100% | Whether failed AI calls correctly route to human queues |
| Data residency compliance | 100% | Whether failover respects geographic data constraints |
| Time to full capacity | < 5 minutes | How long until the surviving environment handles full load |
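Drill results are easier to track over time when they are checked against these targets automatically. The sketch below encodes the targets from the table above. The metric key names and the `failed_metrics` helper are hypothetical, and the measured values in the example are made up for illustration.

```python
# Targets copied from the metrics table; "max" means the value must stay below it.
TARGETS = {
    "failover_detection_s": ("max", 10),
    "call_rerouting_s": ("max", 30),
    "active_call_drop_pct": ("max", 5),
    "degradation_accuracy_pct": ("min", 100),
    "data_residency_compliance_pct": ("min", 100),
    "time_to_full_capacity_s": ("max", 300),
}


def failed_metrics(measured: dict) -> list:
    """Return the names of metrics that are missing or miss their target."""
    failures = []
    for name, (kind, limit) in TARGETS.items():
        value = measured.get(name)
        if value is None:
            failures.append(name)            # an unmeasured metric counts as a failure
        elif kind == "max" and not value < limit:
            failures.append(name)
        elif kind == "min" and value < limit:
            failures.append(name)
    return failures


drill = {
    "failover_detection_s": 6,
    "call_rerouting_s": 41,
    "active_call_drop_pct": 2.5,
    "degradation_accuracy_pct": 100,
    "data_residency_compliance_pct": 100,
    "time_to_full_capacity_s": 180,
}
print(failed_metrics(drill))
```

Treating a missing measurement as a failure keeps a drill from passing simply because a metric was never captured.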

What Compliance Considerations Apply During Failover Events?

Failover events can inadvertently violate compliance requirements if DR architecture does not account for regulatory constraints.

Data residency during failover: A voice AI platform that fails over from an APAC region to a US region may violate Australian data residency requirements. DR architecture must ensure that failover targets are within the same regulatory jurisdiction, or that cross-border data transfer agreements are in place before a failover event occurs.

Audit continuity: SOC 2 and HIPAA require continuous audit logging. A failover event that creates gaps in audit logs represents a compliance violation, not just an operational issue. Write-ahead logging with synchronous replication to the DR environment prevents audit gaps.

Encryption in transit during failover: SIP traffic rerouted to backup infrastructure must maintain TLS/SRTP encryption. Failover paths that fall back to unencrypted SIP create security exposures.

Notification requirements: Some regulatory frameworks require notification when processing moves to different infrastructure. DR procedures must include compliance notification workflows.
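Audit continuity across a failover can be verified mechanically if every audit event carries a monotonically increasing sequence number. The sketch below assumes such a scheme. The `seq` field and the `missing_sequence_numbers` helper are hypothetical and do not reflect any particular logging product.

```python
def missing_sequence_numbers(events):
    """Return sequence numbers absent from an audit log that should be contiguous.

    `events` is an iterable of dicts with an integer "seq" key (hypothetical schema).
    """
    seqs = sorted({e["seq"] for e in events})
    if not seqs:
        return []
    present = set(seqs)
    return [n for n in range(seqs[0], seqs[-1] + 1) if n not in present]


# Events 4 and 5 were written on the primary but never reached the DR replica.
replica_log = [{"seq": 1}, {"seq": 2}, {"seq": 3}, {"seq": 6}, {"seq": 7}]
print(missing_sequence_numbers(replica_log))
```

Running this check against the DR environment's log after every drill turns "no audit gaps" from an assumption into a measured result.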

Trillet maintains SOC 2 Type II compliance and CREST-certified penetration testing across all deployment environments — primary and DR — ensuring that failover does not degrade the security posture.

Frequently Asked Questions

What is the acceptable RTO for enterprise voice AI?

For telephony and call routing, RTO should be under 30 seconds. Callers will tolerate a brief delay or hold music, but silence or disconnection beyond a few seconds results in abandoned calls. AI processing can tolerate slightly longer RTOs if graceful degradation to human agents is in place.

Can active calls survive a failover event?

With active-active architecture and real-time session state replication, active calls can survive failover with minimal disruption (brief audio interruption of 1-3 seconds). Active-passive architectures typically cannot preserve active calls. The trade-off is cost and complexity — session replication across regions requires significant infrastructure investment.

How does on-premise Docker deployment support DR?

On-premise Docker containers orchestrated via Kubernetes provide container-level self-healing (automatic restart of failed containers) and node-level failover (rescheduling to healthy nodes). Combined with cloud failover, on-premise deployment creates a hybrid DR model where neither the data center nor the cloud is a single point of failure. Contact Trillet Enterprise to discuss hybrid DR architectures for your deployment.

What happens to data residency compliance during a failover?

DR architecture must ensure failover targets reside within the same regulatory jurisdiction as the primary deployment. Trillet's configurable data residency supports region-locked failover, where APAC deployments fail over to APAC infrastructure, preventing cross-border data transfer violations during DR events.

How often should voice AI DR procedures be tested?

Monthly automated failover drills are the minimum recommended cadence, with quarterly full-scale DR exercises that include telephony failover, AI degradation testing, and compliance verification. Untested DR plans have a significantly higher failure rate during actual incidents.

Conclusion

Disaster recovery for enterprise voice AI requires purpose-built architecture that accounts for real-time stateful sessions, telephony signaling constraints, and sub-30-second recovery targets. The choice between active-active, active-passive, and hybrid cloud/on-premise patterns depends on your organization's tolerance for call interruption, budget for redundant infrastructure, and regulatory requirements for data residency during failover events.

The non-negotiable requirement across all patterns is graceful degradation: when AI fails, calls must route to human agents, not silence. Architecture that treats this as a first-class design concern, rather than an afterthought, is what separates enterprise-grade voice AI from platforms that work well until they do not.

Contact Trillet Enterprise to discuss disaster recovery architecture for your voice AI deployment, including hybrid on-premise/cloud configurations, multi-region failover, and financially guaranteed 99.99% uptime SLAs.

Related Resources:

Enterprise Voice AI Orchestration Guide - Complete guide to enterprise voice AI deployment

Voice AI 99.99% Uptime SLA Requirements - How to evaluate and enforce uptime commitments

On-Premise Voice AI Deployment via Docker - Technical guide to self-hosted voice AI infrastructure

Choosing Between Cloud, Hybrid, and On-Premise Voice AI - Deployment model comparison for enterprises

Voice AI for Australian Enterprises: APRA CPS 234 and IRAP Compliance - Regulatory compliance for Australian deployments